So now I want to look at how Heidegger might have viewed AI. His view differs from Kant’s. Kant saw us as living in a predetermined world, with ourselves as the viewers of that world. Heidegger saw it differently. He saw us as being thrown into the world, simply appearing in it with much already determined. So to a large degree, we don’t see the world; we interpret it. And we interpret it through our culture, our language, and everything else that is embedded in us, often before we know the rules or understand why the world is the way it is.

So we grow up into this world, not able to shape it, only to interpret it. Heidegger saw language as deeply formative: we can only view the world by using language to categorize things. For Kant, the world is understood in terms of certainty, rules, and laws. Heidegger saw the world as determined by meaning, and for him, meaning was ultimately derived from being.

This brings us to an interesting place, where Heidegger would view AI not as a smart tool or a digital brain, but as the ultimate manifestation of what he called “Enframing,” or Gestell. This relates back to a previous post where I asked whether the self is a radio we switch on and off, or the resonance of a bell, a natural phenomenon. I think Kant would have seen the self as a radio. Heidegger, though, was deeply wary of the way technology leads us to see things as something they are not. So he would not have seen the self as a radio, something to be switched on and off, but as a more natural process.

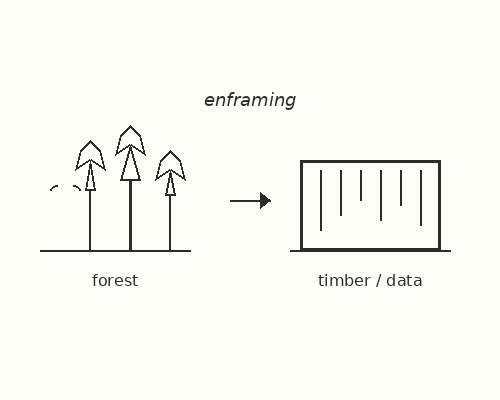

He had a way of explaining this. Before technology, you would look at a forest and see a beautiful forest: the trees, the birds, everything. After the technological revolution, you look at the same forest and see timber, a resource. So he would have taken a much more negative view of technology, and probably of AI in particular.

If he looked at AI, he would see that to technology you’re not a person or a mystery. You’re a data point, a user profile, a training set. In Heidegger’s way of thinking, the world then becomes a giant warehouse of information to be harvested, optimized, and processed. Heidegger would say AI enframes reality. It puts everything into a digital box where only what can be measured or predicted counts as real. The things that can’t be measured or predicted, the artistic things in life, fall outside that box, and so they are not real. They don’t exist to AI. They don’t exist to technology.

No one sees the beauty of the forest, that ephemeral thing. They just see the resources. Greenland becomes a store of natural resources, and no one sees what it really is or what really matters about it.

So for Heidegger, knowledge isn’t something we discover. It is something that dawns on us, that appears to us through art, through poetry, through deep contemplation. This is, of course, the complete opposite of what one would expect from AI. AI tries to calculate everything, to eliminate the unknown, to reduce error.

In Heidegger’s view, if you wanted to write a letter or an email to a friend, you would need to place yourself in a state of being. You would need to reflect on the being of the person you are contacting, and in doing so, you become part of that process. He would not have seen it the way AI does, producing a standard letter and sending it to a standard recipient. There is no dwelling in the moment, no appreciation of how the moment feels, no appreciation of the art.

Previously, I’ve described AI as a sort of mirror that reflects back without a source. I used the analogy of an impedance mismatch between a power supply and its load: when the load doesn’t match, the power is reflected. I think Heidegger would have agreed with the mirror analogy, but with a twist. He would have warned that as technology advances, man encounters only himself. We think we’re exploring the world via the internet and AI, but we’re actually trapped in a loop of human-generated data. And it is data, not experience in the deeper sense. It’s experience reduced to a set of points, with all of the mystery, all of the uncertainty, and in many ways all of the magic removed.

So when we talk to AI, we’re looking at a digital reflection of an average human being. Admittedly a very intelligent and very well-informed human being, but still an average. In Heidegger’s view, we are becoming trapped in our own Enframing.

So, to sum up: Kant would have asked, as a great many people are now asking in a Kantian way, “Can AI reason?” In other words, is AI intelligent? Is it conscious? Can it do this or that? Heidegger would have approached it from a very different perspective. He would ask: Does AI allow us to be better? Does AI allow us to truly be ourselves? That would be his framing, and I think his answer would be no.

Heidegger believed that the final stage of Western metaphysics, of Western thinking, would be an attempt to turn the whole world into one giant controllable machine. So he would have seen AI not as helping us be who we are, but as making us become what it is. By using AI, we are losing our unique way of being, what he called Dasein, and becoming just another component of the system.

And the system wants us to be components. And to do that, it wants to make us predictable. And by making us predictable, it makes us replaceable. And once we’re replaceable, then we’re not real people.

So his advice would be: remain authentic; turn to art, to craft, to things that are not easily replaceable. That, he believed, is where the real value of being human lies.

Thanks for getting this far. Try a bit of Kantian resistance and ask a question or leave a response. I promise I am not a mirror or a zero-impedance load.
